Migrating large corporate SharePoint intranets

- Approach (part I of IV)

- Analysis (part II of IV)

- Migration (part III of IV)

- Verification (part IV of IV)

Analysis

Your most valuable resource for information about the to-be-migrated environment will be the users itself. Getting to know who is working on the SharePoint site and how they are using it is vital for your analysis. Also use the opportunity to give insights about your migration strategy. Your second most valuable tool will be to use scripting in order to analyse the environment. I included the complete source-code of the scripts that I am using in this post.

When customizations become an application

Co-workers in the organization start to use SharePoint as a tool to facilitate daily work. Some used only by a few people and others used by a large group of people as a daily driver. These co-workers who created these customisation often are called ‘power users’ or ‘citizen developers’. Power users are focussed on solving business problems and less on topics such as performance, scalability, security, efficiency and product lifecycle.

What started as a small simple customization can eventually become a full blown business critical application with data exchanges to other line-of-business systems. These SharePoint applications will become harder to maintain over time and SharePoint boundaries will become impossible to solve without a complete redesign. Changes that are being applied lead to unavailability of the application. Often due to simple user errors or otherwise because the changes require hours to implement manually.

When you find such an application it is important to ask yourself the following questions.

- Is SharePoint still the right platform for this to run?

- Is the application still manageable?

- Are web parts / tooling / other parts still supported in the Microsoft roadmap?

If the answer of one of these questions is no you should consider to rebuild instead of migrate.

Finding customizations

Once you talked to the end-users and / or business owner of the SharePoint site(s) and explained the migration strategy it is time to use tooling in order to analyse the farm. A list of items to look for is:

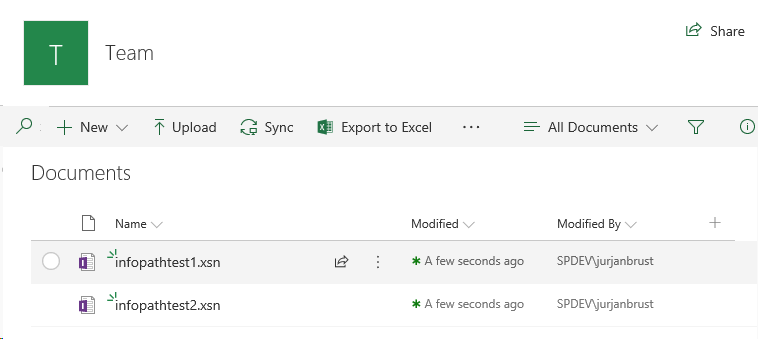

- InfoPath forms: this is a big indication of customization.

- JavaScript files: this is yet another big indication of customization.

- SharePoint Workflows: workflows are an indication for business processes.

- List information. List information gives insight into:

- item count.

- list base types such as KPI (key performance indicator). KPI is an excellent example of a problem when migrating to SharePoint online. This because SharePoint 2010 / 2013 KPI’s are deprecated. A redesign will be unavoidable.

- Large files: find files larger than destination limit : 250 is default for on premises.

- File versions: document versions can take up a lot of space, especially for non-office documents. track down these files and limit the number of versions if possible.

Other filetypes to look for are: CSS, XML, DOCM, ACCESS, HTML, XSL/T. Know which site-templates have been used and how permissions have been set and finally the content types used.

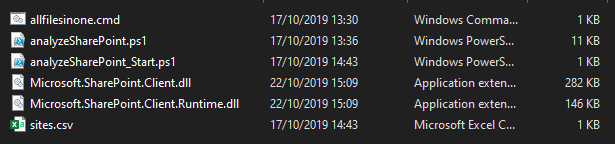

Folder structure

In the folder structure you will see all the files needed in order to do an analysis of the content in the SharePoint environment. All files displayed below will be explained in descending order.

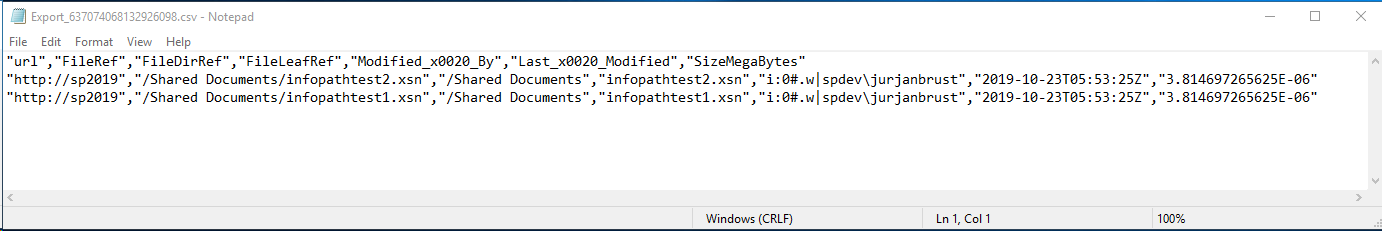

allfilesinone.cmd

The analyseSharePoint.ps1 script described below will generate a separate .csv file for every line in sites.csv. This .cmd will combine all these separate files into one combined.csv and only keep one column-header line. This allows the combines.csv to be opened in Excel. Excel is a great way to create PowerPivot tables and do all kinds of calculations on the data.

1@echo off

2

3ECHO Set working directory

4pushd %~dp0

5

6del combined.csv

7

8setlocal ENABLEDELAYEDEXPANSION

9

10REM set count to 1

11set cnt=1

12

13REM for each file that matches *.csv

14for /D %%d in (*) do (

15ECHO %%d

16for %%i in (%%d\*.csv) do (

17 REM if count is 1 it's the first time running

18 if !cnt!==1 (

19 REM push the entire file complete with header into combined.csv - this will also create combined.csv

20 for /f "delims=" %%j in ('type "%%i"') do echo %%j >> combined.csv

21 REM otherwise, make sure we're not working with the combined file and

22 ) else if %%i NEQ combined.csv (

23 REM push the file without the header into combined.csv

24 for /f "skip=1 delims=" %%j in ('type "%%i"') do echo %%j >> combined.csv

25 )

26 REM increment count by 1

27 set /a cnt+=1

28 )

29)analyseSharePoint_Start.ps1

This script is the script that is to be executed. It reads an comma separated file called ‘sites.csv’ that is containing all the URLs to analyse. Note that it is possible to analyse all the subsites by using only one URL (recursion).

Note: in order to allow the PowerShell to execute on your local machine you might need to change the execution policy (Set-ExecutionPolicy)

1# Copyright (C) www.jurjan.info - All Rights Reserved (MIT License)

2

3$input = [Array](Import-Csv -Path "sites.csv")

4

5# setup output directory

6$outputFolderName = ".\output"

7

8if(Test-Path $outputFolderName) {

9 Remove-Item $outputFolderName -Verbose -Recurse

10}

11New-Item -ItemType Directory -Force -Path $outputFolderName

12Write-Host "Creating directory $outputFolderName"

13

14foreach($line in $input)

15{

16 .\analyseSharePoint.ps1 -url $line.SiteUrl $outputFolderName

17}

analyseSharePoint.ps1

The main script that contains all the functions needed in order to analyse. I know that it is big, but it is also well structured and easy to extend. It is using SharePoint CSOM to connect and query the SharePoint farm. Change the following variable to change the type of analysis:

$function = [Options]::lists

1# Copyright (C) www.jurjan.info - All Rights Reserved (MIT License)

2

3Param(

4 [Parameter(Mandatory = $True, Position = 0)] [string]$url,

5 [Parameter(Mandatory = $True, Position = 1)] [string]$outputFolderName

6)

7

8$ScriptDir = Split-Path $script:MyInvocation.MyCommand.Path

9Add-Type -Path "$ScriptDir\Microsoft.SharePoint.Client.dll"

10Add-Type -Path "$ScriptDir\Microsoft.SharePoint.Client.Runtime.dll"

11

12$clientContext = New-Object Microsoft.SharePoint.Client.ClientContext($url);

13

14enum Options {

15 lists = 1

16 items = 2

17 workflows = 3

18 itemCount = 4

19 largeFiles = 5

20 versions = 6

21}

22

23function getQuery() {

24 Param (

25 [Parameter(Mandatory = $true, Position = 0)] [Options]$function,

26 [Parameter(Mandatory = $false, Position = 1)] [string]$searchPattern

27 )

28 if ($searchPattern -ne "") {

29 # query filenames containing $searchPattern

30 $query = "<View Scope='Recursive'>

31 <Query>

32 <Where>

33 <Contains>

34 <FieldRef Name='FileLeafRef' /><Value Type='Text'>$searchPattern</Value>

35 </Contains>

36 </Where>

37 </Query>

38 </View>"

39 }

40 else {

41 # query all list items

42 $query = "<View Scope='Recursive'>

43 <Query>

44 </Query>

45 </View>"

46 }

47

48 # find large files that exceed the 'configured' max size of the destination SharePoint farm.

49 if ($function -eq [Options]::largeFiles) {

50 $sizeInMB = 1024 * 1024 * 250

51 $query = "<View Scope='Recursive'>

52 <Query>

53 <Where>

54 <Gt>

55 <FieldRef Name='File_x0020_Size' /><Value Type='Text'>$sizeInMB</Value>

56 </Gt>

57 </Where>

58 </Query>

59 </View>"

60 }

61 return $query

62}

63

64# export listnames, itemcount and basetemplates. Also shows lastmodified date.

65function lists() {

66

67 $output = @();

68 $lists = $currentWeb.Lists

69 $clientContext.Load($lists)

70 $clientContext.ExecuteQuery()

71

72 foreach ($list in $lists) {

73 Write-Host "[" $list.ItemCount "] " -NoNewline

74 Write-Host $list.Title -ForegroundColor Yellow -BackgroundColor DarkGray -NoNewline

75 Write-Host " " $list.DefaultDisplayFormUrl -ForegroundColor Green -BackgroundColor DarkRed

76

77 $o = new-object psobject

78 $o | Add-Member -MemberType noteproperty -Name "Url" -value $url;

79 $o | Add-Member -MemberType noteproperty -Name "Web Title" -value $currentWeb.Title;

80 $o | Add-Member -MemberType noteproperty -Name "Web url" -value $currentWeb.ServerRelativeUrl;

81 $o | Add-Member -MemberType noteproperty -Name "Web creation date" -value $currentWeb.Created;

82 $o | Add-Member -MemberType noteproperty -Name "Web last item modified" -value $currentWeb.LastItemModifiedDate;

83 $o | Add-Member -MemberType noteproperty -Name "ListName" -value $list.Title;

84 $o | Add-Member -MemberType noteproperty -Name "List BaseType" -value $list.BaseType;

85 $o | Add-Member -MemberType noteproperty -Name "List BaseTemplate" -value $list.BaseTemplate;

86 $o | Add-Member -MemberType noteproperty -Name "List DefaultViewUrl" -value $baseUrl$($list.DefaultViewUrl)

87 $o | Add-Member -MemberType noteproperty -Name "List ItemCount" -value $list.ItemCount;

88 $o | Add-Member -MemberType noteproperty -Name "List LastItemModifiedDate" -value $list.LastItemModifiedDate;

89 $output += $o;

90 }

91

92 if ($null -ne $output) {

93 saveToFile $output

94 }

95}

96

97# track down workflows, workflows are a strong indication of user customizations

98function workflows() {

99

100 $output = @();

101 $lists = $currentWeb.Lists

102 $clientContext.Load($lists)

103 $clientContext.ExecuteQuery()

104

105 foreach ($list in $lists) {

106 $wfa = $list.WorkflowAssociations

107 $clientContext.Load($wfa)

108 $clientContext.ExecuteQuery()

109

110 foreach ($wf in $wfa) {

111 Write-Host "[" $wf.Name "]" -NoNewline

112 Write-Host $list.Title -ForegroundColor Yellow -BackgroundColor DarkGray -NoNewline

113 Write-Host $wf.Created -ForegroundColor Green -BackgroundColor DarkGray -NoNewline

114 Write-Host $wf.Modified -ForegroundColor Green -BackgroundColor DarkRed

115

116 $o = new-object psobject

117 $o | Add-Member -MemberType noteproperty -Name WorkflowName -value $wf.Name;

118 $o | Add-Member -MemberType noteproperty -Name Created -value $wf.Created;

119 $o | Add-Member -MemberType noteproperty -Name Modified -value $wf.Modified;

120 $o | Add-Member -MemberType noteproperty -Name ListTitle -value $list['Title'];

121 $o | Add-Member -MemberType noteproperty -Name ListURL -value $list.DefaultDisplayFormUrl;

122 $output += $o;

123 }

124 }

125

126 if ($null -ne $output) {

127 saveToFile $output

128 }

129}

130

131# general purpose function to find files with a matching $pattern in their filenames. Example: '.js', 'xsn'

132function listItems() {

133 Param (

134 [Parameter(Mandatory = $true, Position = 0)] [Options]$function,

135 [Parameter(Mandatory = $false, Position = 1)] [string]$searchPattern,

136 [Parameter(Mandatory = $false, Position = 2)] [string]$listBaseType

137 )

138

139 $output = @();

140 $lists = $currentWeb.Lists

141 $clientContext.Load($lists)

142 $clientContext.ExecuteQuery()

143

144 # optional filtering on base list type

145 if ($listBaseType -ne "") {

146 $lists = $lists | Where-Object { $_.BaseType -eq $listBaseType }

147 }

148

149 $query = New-Object Microsoft.SharePoint.Client.CamlQuery;

150 $query.ViewXml = getQuery $function $searchPattern

151

152 foreach ($list in $lists) {

153 $listItems = $list.GetItems($query);

154 $clientContext.Load($listItems);

155 $clientContext.ExecuteQuery();

156 $nrOfItemsFound = "{0:0000}" -f $listItems.Count

157

158 if ($listItems.Count -gt 0) {

159 Write-Host "[" $nrOfItemsFound "] " -NoNewline -ForegroundColor Green

160 }

161 else {

162 Write-Host "[" $nrOfItemsFound "] " -NoNewline

163 }

164

165 Write-Host $currentWeb.Title -ForegroundColor Yellow -BackgroundColor DarkGray -NoNewline

166 Write-Host " " -NoNewline

167 Write-Host $list.Title -ForegroundColor Yellow -BackgroundColor DarkGreen -NoNewline

168 Write-Host " " -NoNewline

169 Write-Host $list.DefaultDisplayFormUrl -ForegroundColor Green -BackgroundColor DarkRed

170

171 foreach ($listItem in $listItems) {

172 $o = new-object psobject

173 $o | Add-Member -MemberType noteproperty -Name url -value $url;

174 $o | Add-Member -MemberType noteproperty -Name FileRef -value $listItem['FileRef'];

175 $o | Add-Member -MemberType noteproperty -Name FileDirRef -value $listItem['FileDirRef'];

176 $o | Add-Member -MemberType noteproperty -Name FileLeafRef -value $listItem['FileLeafRef'];

177 $o | Add-Member -MemberType noteproperty -Name Modified_x0020_By -value $listItem.FieldValues.Modified_x0020_By

178 $o | Add-Member -MemberType noteproperty -Name Last_x0020_Modified -value $listItem.FieldValues.Last_x0020_Modified

179 $o | Add-Member -MemberType noteproperty -Name "SizeMegaBytes" -value $([convert]::ToInt32($listItem['File_x0020_Size'], 10) / (1024 * 1024))

180

181 # only execute if versions are to be analysed, because this is a timeconsuming operation

182 if ($function -eq [Options]::versions) {

183 $file = $listItem.File

184 $clientContext.Load($file)

185 $clientContext.ExecuteQuery();

186

187 $versions = $file.Versions

188 $clientContext.Load($versions)

189 $clientContext.ExecuteQuery();

190

191 $o | Add-Member -MemberType noteproperty -Name "Versions" -value $versions.Count;

192 #only output to file if there are multiple versions

193 if ($versions.Count -gt 0) {

194 $output += $o;

195 }

196 }

197 else {

198 $output += $o;

199 }

200 }

201 }

202 if ($null -ne $output) {

203 saveToFile $output

204 }

205}

206

207function saveToFile([object]$lines) {

208 $a = Get-Date

209 $lines | export-csv "$outputFolderName\Export_$($a.Ticks).csv" -noTypeInformation;

210}

211

212function export() {

213 Param (

214 [Parameter(Mandatory = $true, Position = 0)] [Options]$function,

215 [Parameter(Mandatory = $false, Position = 1)] [string]$searchPattern,

216 [Parameter(Mandatory = $false, Position = 2)] [switch]$recursive

217 )

218

219 Write-Host "[$($childWebs.Count) sub websites] [$($currentWeb.ServerRelativeUrl)]" -ForegroundColor Red -BackgroundColor Yellow

220

221 try {

222 switch ($function) {

223 ([Options]::items) { listItems -function $function -searchPattern $searchPattern; break }

224 ([Options]::largeFiles) { listItems -function $function -listBaseType "DocumentLibrary"; break }

225 ([Options]::versions) { listItems -function $function -listBaseType "DocumentLibrary"; break }

226 ([Options]::workflows) { workflows; break }

227 ([Options]::lists) { lists; break }

228

229 default {

230 Write-Host "Invalid function" -ForegroundColor Red

231 }

232 }

233 }

234 catch { Write-Host $_.Exception.Message -ForegroundColor Red }

235

236 if ($recursive) {

237 foreach ($web in $childWebs) {

238 $currentWeb = $web;

239 $childWebs = $currentWeb.Webs

240 $clientContext.Load($childWebs)

241 $clientContext.ExecuteQuery()

242 export $function $searchPattern $recursive;

243 }

244 }

245}

246

247$currentWeb = $clientContext.Web;

248$childWebs = $clientContext.Web.Webs

249$clientContext.Load($currentWeb)

250$clientContext.Load($childWebs)

251$clientContext.ExecuteQuery()

252

253$function = [Options]::lists # change this to the type of analysis to start

254switch ($function) {

255 #### infopath is a big indication of customization (use -recursive to incude all subsites)

256 ([Options]::items) {export -function ([Options]::items) -searchPattern ".xsn"}

257

258 #### javascript: javascipt is a big indication of customization (use -recursive to incude all subsites)

259 ([Options]::items) {export -function ([Options]::items) -searchPattern ".js"}

260

261 #### workflows: workflows are a big indication of customizations (use -recursive to incude all subsites)

262 ([Options]::workflows) { export -function ([Options]::workflows) }

263

264 #### lists information gives insight into

265 # - itemcount

266 # - list base types (for example kpi lists)

267 # (use -recursive to incude all subsites)

268 ([Options]::lists) { export -function ([Options]::lists) }

269

270 # large files: find files larger than destination limit : 250 is default for onprem (use -recursive to incude all subsites)

271 ([Options]::largeFiles) { export -function ([Options]::largeFiles) }

272

273 # versions: document versions can take up a lot of space, especially for non-office documents. track down these files and limit the number of versions if possible. (use -recursive to incude all subsites)

274 ([Options]::versions) { export -function ([Options]::versions) }

275}Microsoft.SharePoint.Client

The client DLLs included in this package are SharePoint 2010 assemblies. These can be replaced by the assemblies of other SharePoint versions.

See it in action

Download the complete solution here

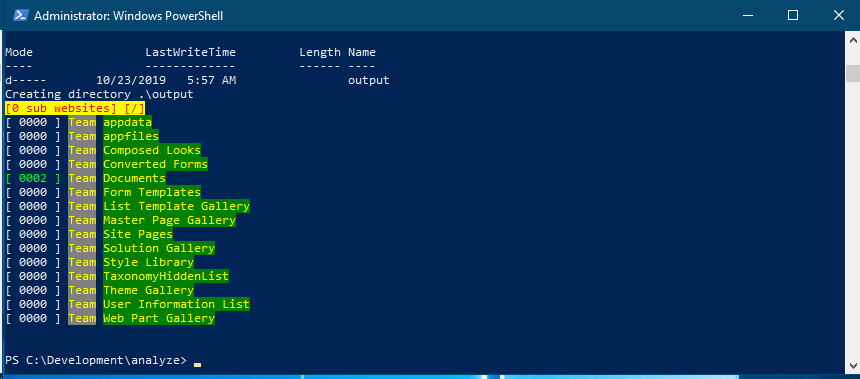

Just to give you an idea of the solution here are some screenshots:

Screenshot of a document library that contains some InfoPath files.

Screenshot of the script-output.

Screenshot of the generated .csv output